Searching for Wisdom

Monday, March 16, 2015

Oldest Registered .COM Domains and where they are now

Monday, March 14, 2011

Some Israeli Music

Here's a quick list of some Israeli music on YouTube that I've liked over the years, and continue to like.

One of the first Israeli rock/pop groups, the High Windows, with Arik Einstein, Shmulik Krauss, and Josie Katz. From their first and only album, The High Windows.

You Can't and First Love

Next for Arik, The Churchills...pretty much a copy of the Beatles, but toned down a bit.

Achinoam doesn't know

From Lul, a classic comedy series with Arik Einstein, Uri Zohar and others around 1972.

I like to Sleep

This is one of my favorites, also from Lul.

Why do I take to Heart, Arik Einstein and a very young Shalom Hanoch.

A relatively recent group. Great pictures in the video, though.

Ethnix, She won't come back.

Yehuda Poliker songs. He's of Greek heritage, and his family history is worth learning about. His early music (Benzine) was straight-on Israeli rock. Later he let the Greek influences out.

Of course, there's always Aviv Geffen. I never really got his goth look in the early days, well, still don't, but with his parentage you knew he could do something like this. Cry for You

Wednesday, July 21, 2010

Finally, A New Posting---Systems

It's been a long time since I last did a blog posting. Partly, this is because I've been working with a different company for the last year and it was hard to post anything interesting that didn't impinge on their proprietary interests. That's still true to some degree, but a lot of what I've seen with respect to system design there is still relevant. Of course, the other motivation is that the company I'm working for is ITA Software, which Google recently announced they were acquiring. So, if all goes well, sometime in the not too distant future I'll be a Google employee.

So, let me start with ITA's products, some of their differences, and how not to learn from success. ITA's original product is a shopping and pricing product that is used on many web sites to find the flight combination for an itinerary with the best price. This is a much harder problem than I expected, largely because airlines are really clever at controlling their prices to get the most money out of travelers on each flight. And as if that wasn't hard enough, there are all those extra fees that need to be included (and taxed) in order to get the actual price of the tickets. It gets worse for international flights that cross multiple borders, each with its own currency, tax structure, and conversion rate. Just figuring out the price to charge for a Coke on a flight is daunting.

But the ITA people are really smart, they work really hard, and they figured out how to do all of that, and how to do it all very fast, accurately, and reliably. This problem, while very complex to get right and make efficient, is at its core very simple. It is a special filtered search in a very, very large graph which is never actually generated. One way to think about the problem is that it is like looking for the way out of a large maze. At each corner you have 2 or 3 possible choices, and the complete graph of all possible choices could be enormously large. You can't generate the whole graph, and local optima won't get you out of the maze. Sounds like an Artificial Intelligence problem, doesn't it? Would you be surprised that our CEO comes from the AI lab at MIT?
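To make the maze analogy concrete, here is a minimal sketch of a filtered search over a graph that is never built up front. It is not ITA's algorithm, just a plain uniform-cost search; the names `lazy_search`, `successors`, `allowed`, and the toy wall layout are all made up for illustration.

```python
import heapq
from itertools import count


def lazy_search(start, successors, is_goal, allowed=lambda state: True):
    """Uniform-cost search over a graph that is expanded only on demand.

    successors(state) yields (next_state, step_cost) pairs, so no part of
    the graph exists until the search actually reaches it.
    allowed(state) is the filter: rejected states are never explored.
    """
    tie = count()  # tie-breaker so the heap never has to compare states
    frontier = [(0, next(tie), start, [start])]
    best = {start: 0}
    while frontier:
        cost, _, state, path = heapq.heappop(frontier)
        if cost > best.get(state, float("inf")):
            continue  # stale entry; a cheaper route was already found
        if is_goal(state):
            return cost, path
        for nxt, step in successors(state):
            if not allowed(nxt):
                continue
            new_cost = cost + step
            if new_cost < best.get(nxt, float("inf")):
                best[nxt] = new_cost
                heapq.heappush(frontier, (new_cost, next(tie), nxt, path + [nxt]))
    return None  # frontier exhausted without reaching a goal


# Toy maze on an unbounded grid (hypothetical): a short wall sits between
# the start and the exit, so always stepping toward the exit gets stuck.
walls = {(1, -1), (1, 0), (1, 1)}


def grid_moves(pos):
    x, y = pos
    for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        yield (x + dx, y + dy), 1


cost, path = lazy_search(
    start=(0, 0),
    successors=grid_moves,
    is_goal=lambda pos: pos == (3, 0),
    allowed=lambda pos: pos not in walls,
)
```

The grid here is infinite, yet the search only ever touches the handful of cells near the cheapest route, which is the point of never generating the whole graph.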

Next time, I'll talk a bit about the project I'm working on, and how it differs.

Tuesday, January 26, 2010

Keep the Candle Burning

I wasn’t at Sun for very long, but like most people that worked there, it made quite an impression on me. Despite decades teaching engineering at universities, consulting, and working in industry, it was at Sun that I learned what it meant to be an engineer and how to celebrate success. Scott summed it up best: “kick butt and have fun”.

So, I’m disappointed in Jonathan’s farewell message as reported in the Register: “Go home, light a candle, and let go of the expectations and assumptions that defined Sun as a workplace. Honor and remember them, but let them go.”

Honor those expectations and assumptions for sure, but never ever let them go. Those characteristics made Sun great and will make other companies and other engineers great as well.

Light that candle and let it show you the way. And keep it burning so others may also benefit.

Thank you Scott.

Thursday, March 26, 2009

Project Caroline

Here is a pointer to that presentation.

It begins with my introduction and motivation for the project. At 5:30, John McClain gives a demonstration of the use of Project Caroline to create an Internet service, creating a Facebook application. At 34:00, Bob Scheifler gives a developer-oriented view of the resources available in the Project Caroline platform and how those resources are programmatically controlled. At 1:06:00, Vinod Johnson demonstrates how the Project Caroline architecture and APIs facilitate the creation and deployment of horizontally scaled applications that dynamically adjust their resource utilization. This is done using an early version of the Glassfish 3.0 application server that has been modified to work with Project Caroline. Finally, at 1:33:30, John McClain returns to summarize the demos and then answer a few questions.

While the project is no longer active at Sun, the Project Caroline web site is still running, where you can find additional technical discussions and through which you can download all of the source code for Project Caroline. It's worth noting that the web site is a Drupal-managed site that is itself running on Project Caroline!

Wednesday, March 4, 2009

The Data Center Layer Cake

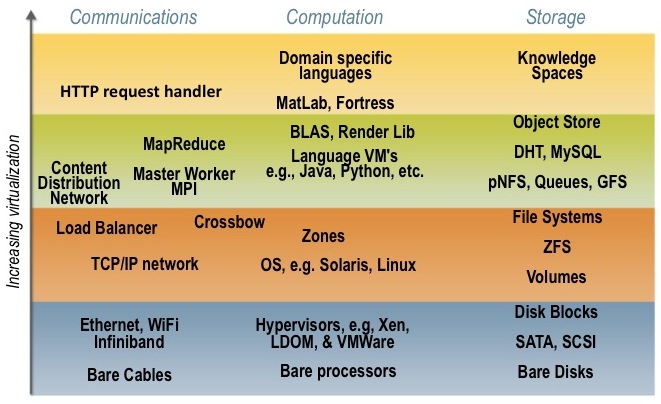

The vertical axis corresponds to the level of virtualization or abstraction at which that technology area is expressed. For instance, computation could be expressed as specific hardware (a server or processor), as a virtualized processor using a hypervisor like Xen, VMWare, or LDOMs, as a process supported by an operating system, as a language-level VM (like the Java VM), etc. As you can see, the computing community has built a rich array of technology abstractions over the years, of which only a small fraction are illustrated.

The purpose of these virtualization or abstraction points is to provide clean, well defined boundaries between the developers and those that create and manage the IT infrastructure for the applications and services created by the developers. While all developers claim to need complete control of the resources in the data center, and all data center operators claim complete control over the software deployed and managed in the data center, in reality a boundary is usually defined between the developer domain and the domains of the data center operator.

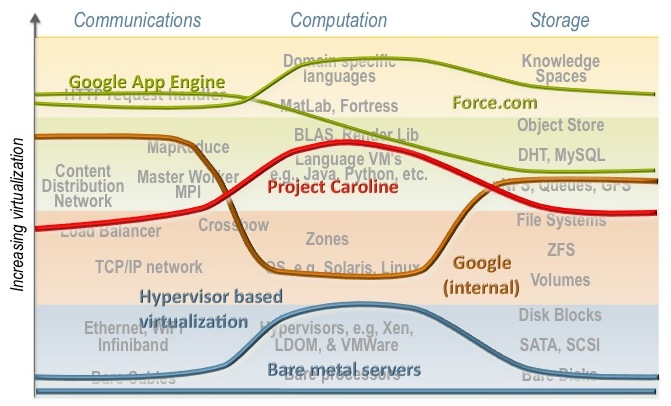

On the diagram we have overlaid rough caricatures of some of these boundaries. The higher the line is, the more room the DC operator has to innovate (e.g., in choosing types of disks, networking, or processors), and the smaller the developer's job in actually building the desired service or application.

At the bottom of the diagram we have the traditional data center, where the developer specs the hardware and the DC operator merely cables it up. Obviously, this gives the developers the most flexibility to achieve "maximum" performance, but it makes the DC operator's job a nightmare. The next line up corresponds, more or less, to the early days of EC2 or VMWare, where developers could launch VMs, but these VMs talked to virtual NICs and virtual SAS disks. It is incredibly challenging to connect a distributed file system or content distribution network to a VM which doesn't even have an OS attached.

The brown line corresponds to what we surmise to be the internal platform used by Google developers. For compute, they develop to the Linux platform; for storage, distributed file systems like GFS and distributed structured storage like Bigtable and Chubby are used. I suspect that most developers don't really interact with TCP/IP but instead use distributed control structures like MapReduce.

My team's Project Caroline is represented by the red line. We used language-specific processes as the compute abstraction, completely hiding the underlying operating system used on the servers. This allowed us to leave the instruction set choice to the data center operator.

The green lines correspond to two even higher-level platforms, Google App Engine and Force.com. Note that these platforms make it very easy to build specific types of applications and services, but not all types. Building a general purpose search engine on Force.com or Google App Engine would be quite difficult, while it would be straightforward with Project Caroline or the internal Google platform.

The key question for developers is: which line to choose? If you are building a highly scalable search/advertising engine, then both the green and blue lines would lead to costly solutions, in development, in management, or in both. But if you are building a CRM application, then Force.com is the obvious choice. If you have lots of legacy software that requires specific hardware, then the hypervisor approach makes the most sense. However, if you are building a multiplayer online game, then none of these lines might be optimal. Instead, the line corresponding to Project Darkstar would probably be the best choice.

Regardless, the definition of this line is usually a good place to begin in a large scale project. It is the basic contract between the developers and the IT infrastructure managers.
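One way to picture the line as a contract is to write it down as data. The sketch below covers only the compute column, using the levels and platforms named above; `ComputeLevel`, `COMPUTE_LINE`, and `operator_controls` are illustrative names, and the Google entry is a surmise, as noted earlier.

```python
from enum import IntEnum


class ComputeLevel(IntEnum):
    """Compute abstractions from the layer cake, lowest first."""
    HARDWARE = 0       # a specific server or processor
    HYPERVISOR_VM = 1  # virtualized processor (Xen, VMWare, LDOMs)
    OS_PROCESS = 2     # process supported by an operating system
    LANGUAGE_VM = 3    # language-level VM such as the Java VM


# Where each platform draws its compute line, per the discussion above.
# The Google entry reflects a guess about their internal platform.
COMPUTE_LINE = {
    "traditional data center": ComputeLevel.HARDWARE,
    "early EC2 / VMWare": ComputeLevel.HYPERVISOR_VM,
    "Google internal platform": ComputeLevel.OS_PROCESS,
    "Project Caroline": ComputeLevel.LANGUAGE_VM,
}


def operator_controls(platform):
    """Levels strictly below the line, i.e. the DC operator's room to innovate."""
    line = COMPUTE_LINE[platform]
    return [level for level in ComputeLevel if level < line]


caroline = operator_controls("Project Caroline")
traditional = operator_controls("traditional data center")
```

With the line at the language VM, the operator is free to pick the hardware, hypervisor, and OS; with the line at the hardware, nothing is left for the operator to choose.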

Addendum:

The above discussion oversimplifies a number of issues to make the general principles clearer. Many business issues like partner relationships, billing, needs for specialized hardware, etc. impinge on the simplicity of this "layer cake" model.

Also, note that Amazon's EC2 offering hasn't been static, but has grown a number of higher level abstractions (elastic storage, content distribution, queues, etc.) that allow the developer to choose different lines than the blue hypervisor one and still use AWS. We find the rapid growth and use of these new technologies encouraging as they flesh out the viable virtualization points in the layer cake.

Wednesday, February 25, 2009

Cloud Computing Value Propositions

In a recent paper published by researchers from the RADLab at UC Berkeley, Above the Clouds: A Berkeley View of Cloud Computing, three new hardware aspects of Cloud Computing are suggested:

- The illusion of infinite computing resources available on demand.

- The elimination of an up-front commitment by Cloud users.

- The ability to pay for use of computer resources as needed.

But these are all just variants of "capital expenditure (CAPEX) to operating costs (OPEX) conversion." And in other contexts, it goes by the slightly jaundiced term outsourcing. We've been doing this for years in IT, but the refined techniques developed by Amazon, Google, and others have made finer-grained outsourcing practical.

While these benefits are valuable and lower the barrier to entry for many startups and small organizations, this kind of outsourcing shares the same pitfalls as outsourced manufacturing or design.

I believe there is more value to Cloud Computing than just a new way to outsource. Cloud Computing delivers a far more distinctive value: enabling business agility.

Those who have benefited from Cloud Computing, like Google and Salesforce.com, have done so because their developers have a much richer platform to work with than is traditional. They don't need to configure operating systems or databases, and they don't need to port new packages to their environment and painfully identify conflicting interdependencies. Instead, they have a relatively comprehensive platform to develop to, which provides a distributed data store, data mining, load balancing, etc. without any development or configuration on their part.

It is this rich platform, which spans computing, storage, and communications, that allows Google to rapidly respond to business changes and market sentiment, and run more agilely than their competitors.

The original EC2/S3 offering effectively delivered on the outsourcing business value, but what has made Amazon Web Services compelling today is the rich platform that an AWS developer has at their disposal: load balancing from RightScale, the Hadoop infrastructure from Cloudera, Amazon's content distribution network, etc. So, I believe that a real challenge to the success of Cloud Computing is determining the characteristics of a "platform" that provides the greatest value for Cloud Computing developers. Too high a level of abstraction, e.g., Force.com, narrows the application range.

Too low a level, and you are just outsourcing your IT hardware.